LIFESTYLE

NativePHP: Revolutionizing Desktop Applications with PHP Framework

What is NativePHP? This article explains. The realm of programming and application development is constantly evolving, continually redefining boundaries and …

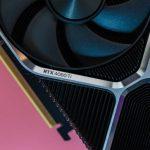

Nvidia’s RTX 4060 Anticipated to Launch Ahead of Schedule

The upcoming Nvidia RTX 4060 may reach the market earlier than anticipated, ahead of previous estimates of a July launch. Given its less impressive …

Subreddit Blackout Causes Reddit to Experience Significant Crash

Reddit experienced significant technical difficulties on Monday, coinciding with a major protest by thousands of subreddits against the website’s newly introduced API pricing terms. The …

Entertainment

Community
